Microsoft Azure

All the different ways to authenticate to Azure SQL, Synapse, and Fabric

In this post I’ll go over all the details on acquiring access tokens to authenticate to any Microsoft SQL engine, including Azure SQL, Azure Synapse, and Microsoft Fabric. We’ll explore users, service principals, managed identities, and Fabric Workspace Identity. Why would you want to do this? Well, if you’re looking to get programmatic access to your database or data warehouse, you’ll need to authenticate. In 2025, more often than not, you’ll be using Microsoft Entra ID to do so, and this is where the fun begins.

Is Microsoft Fabric just a rebranding?

It’s a question I see popping up every now and then. Is Microsoft Fabric just a rebranding of existing Azure services like Synapse, Data Factory, Event Hub, Stream Analytics, etc.? Is it something more? Or is it something entirely new? I hate clickbait titles as much as you do. So, before we dive in, let me answer the question right away. No, Fabric is not just a rebranding. I would not even describe Fabric as an evolution (as Microsoft often does), but rather as a revolution! Now, let’s find out why.

Migrating Azure Synapse Dedicated SQL to Microsoft Fabric

If all those posts about Microsoft Fabric have made you excited, you might want to consider it as your next data platform. Since it is very new, not all features are available yet and most are still in preview. You could already adopt it, but if you want to deploy this to a production scenario, you’ll want to wait a bit longer. In the meantime, you can already start preparing for the migration. Let’s dive into the steps to migrate to Microsoft Fabric. Today: starting from Synapse Dedicated SQL Pools.

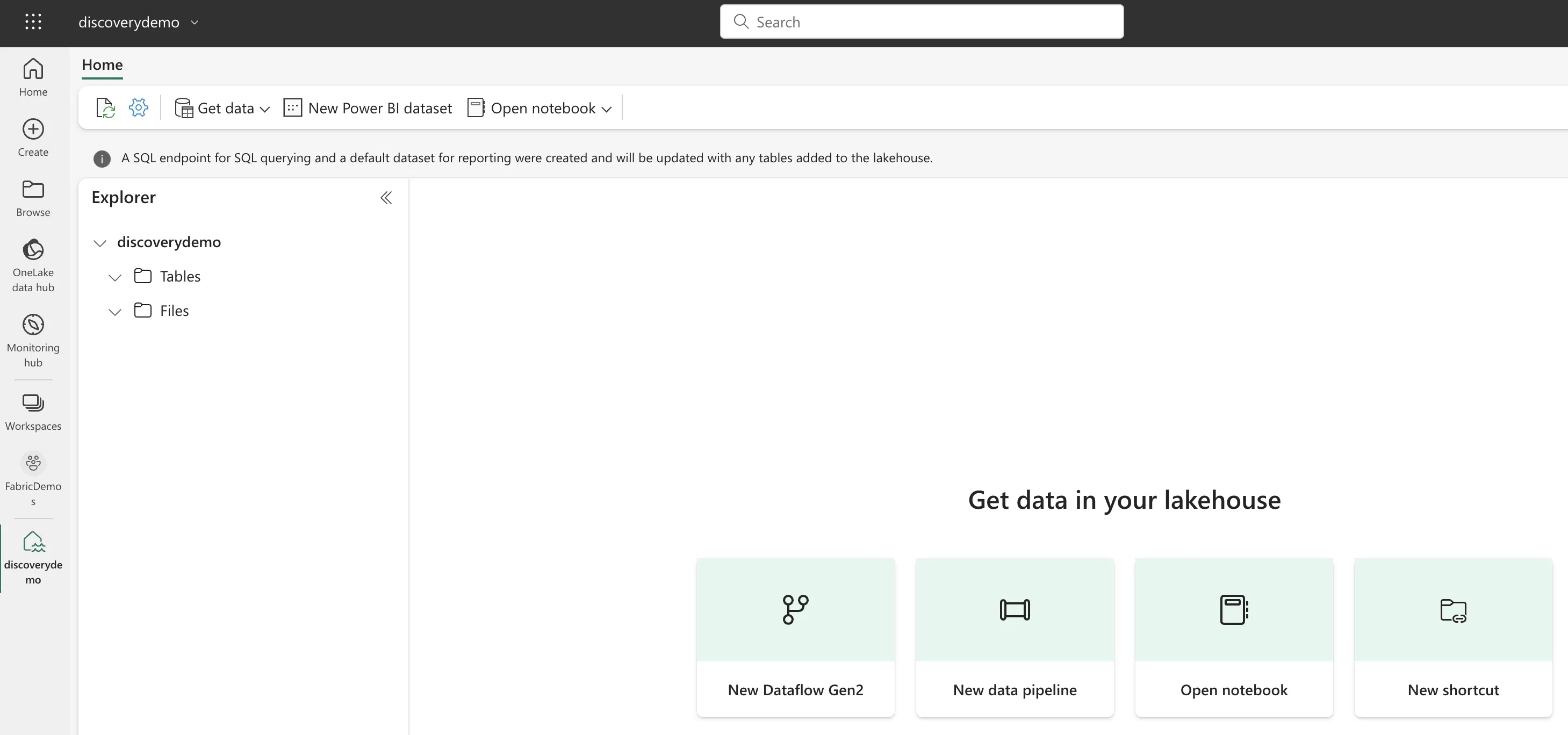

Microsoft Fabric's Auto Discovery: a closer look

In previous posts, I dug deeper into Microsoft Fabric’s SQL-based features and we even explored OneLake using Azure Storage Explorer. In this post, I’ll take a closer look at Fabric’s auto-discovery feature using Shortcuts. Auto-discovery, what’s that? Fabric’s Lakehouses can automatically discover all the datasets already present in your data lake and expose these as tables in Lakehouses (and Warehouses). Cool, right? At the time of writing, there is a single condition: the tables must be stored in the Delta Lake format. Let’s take a closer look.

Exploring OneLake with Microsoft Azure Storage Explorer

Recap: OneLake & Delta Lake. One of the coolest things about Microsoft Fabric is that it nicely decouples storage and compute and is very transparent about the storage: everything ends up in OneLake. This is a huge advantage over other data platforms: you don’t have to worry about moving data around, because it is always available wherever you need it.

Filling the gaps in your code with the Terraform azapi provider for Azure

The cloud is just someone else’s computer, and to manage it we prefer to use Infrastructure as Code (IaC). dataroots believes that IaC can benefit any team working with cloud resources, and most often Terraform is our tool of choice there. As a data & cloud engineer focusing on Microsoft Azure, that is true for me as well. However, there have been a couple of hiccups along the road.

Some interesting takeaways from this year's Techorama

Last week was a busy week for fans of the Microsoft technology stack like myself. Microsoft hosted its yearly developer conference, Microsoft Build, announcing lots of exciting updates to new and existing Azure services. In the meantime, the Belgian community of Microsoft technology users gathered in Kinepolis Antwerp for this year’s edition of Techorama.

Installing the Azure Event Hubs Python SDK on Raspberry Pi OS 64-bit

Since we’re going through some heat waves in Europe, I thought it might be interesting to start measuring the humidity, temperature, and pressure in my apartment. To do so, I decided to use my Raspberry Pi 3 and the Pi Sense HAT, running a Python script that continuously sends measurements to an Azure Event Hub.